December 22, 2022 - by Simone Ulmer

The declared goal of the Square Kilometre Array (SKA) is nothing less than to revolutionise our understanding of the universe and its fundamental physical laws. Since January of this year, Switzerland has been a member of the SKA Observatory (SKAO) through the SKA Switzerland Consortium (SKACH), and thus shares in this ambitious goal while also pursuing goals of its own. Switzerland's membership is intended to benefit both research and industry; accordingly, the CSCS research infrastructure is one of the facilities that research groups can use for data provision and data processing.

The radio telescope, which according to the consortium will be one of the largest scientific facilities ever constructed, is being built at two sites: South Africa and Australia. In South Africa, 197 dish antennas will receive medium to high radio frequencies, while in Australia about 131,000 stationary antennas will receive low-frequency radio signals. Together, these antennas will have a radio signal collecting area of one square kilometre.

Deciphering the origin of the universe

In recent years, modern radio telescopes such as MeerKAT in South Africa, which will become part of SKA, have already identified unexpected galaxies and structures in the universe. With the data collected by SKA, researchers hope to look back into the evolutionary history of the universe: to see how stars and galaxies form, why planets develop around stars, and what dark energy is and what role it plays in the evolution of the universe.

Answering these questions means handling data on an unprecedented scale. To put this in perspective, the Large Hadron Collider at CERN produces around 1 petabyte of data per year, which is distributed to various computing centres and research groups. The same will be true of SKA, but with 600 times more data. That is why scientists and data specialists are already working flat out on procedures for processing this enormous volume of information as efficiently as possible.

Converting the data collected by SKA into images is another particular challenge, because the received signals are incomplete due to the gaps between the antennas. These gaps create artificial noise artefacts that result in a "dirty image", explains Shreyam Krishna, a PhD student at the Laboratory of Astrophysics at EPFL. Krishna is part of the research group led by Jean-Paul Kneib, professor of astrophysics at EPFL, and Emma Tolley, group leader at EPFL's Scientific IT & Application Support (SCITAS) platform, who are spearheading the Next-Generation Radio Interferometry project through PASC (Platform for Advanced Scientific Computing). One of the goals of this PASC project is to develop algorithms that process the enormous amounts of SKA data quickly and reliably, and that compute clearer images from these dirty images.
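A one-dimensional toy model makes the idea of a dirty image concrete. In radio interferometry, each pair of antennas measures one Fourier component (a "visibility") of the sky brightness, so spatial frequencies that no antenna pair covers are simply missing. The sketch below is a simplified illustration, not SKA software: the 32-pixel sky, the two point sources and the set of uncovered frequencies are all hypothetical, and the Fourier transform is written out directly so the example needs only the standard library.

```python
import cmath

def dft(x):
    """Direct discrete Fourier transform (O(N^2), fine for a toy example)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(spectrum):
    """Inverse of dft()."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]

N = 32
sky = [0.0] * N
sky[5], sky[20] = 1.0, 0.6           # two hypothetical point sources

# Hypothetical coverage: the spatial frequencies in `gaps` (and their mirror
# images, so that the image stays real-valued) are measured by no antenna pair.
gaps = {3, 4, 7, 8, 9, 12, 13, 14, 15}
measured = [not (k in gaps or (N - k) % N in gaps) for k in range(N)]

visibilities = dft(sky)                                    # what a complete array would see
sampled = [v if m else 0.0 for v, m in zip(visibilities, measured)]
dirty = [z.real for z in idft(sampled)]                    # the "dirty image"
dirty_beam = [z.real for z in idft([1.0 if m else 0.0 for m in measured])]

print("true sky:", [round(v, 2) for v in sky])
print("dirty   :", [round(v, 2) for v in dirty])
```

In this idealised model the dirty image is exactly the true sky convolved with the "dirty beam" (the instrument's point spread function), which is why the missing components show up as spurious structure spread across the whole image rather than as simple random noise.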

Turning radio signals into images

A mathematical method known as the Fourier transform makes it possible to turn the recorded signals into images. Put simply, the Fourier transform does the same work as a prism that splits white light into its constituent colours. The challenge with SKA, though, is how to get from the incomplete signal recording to the actual image, Krishna explains. Unlike optical telescopes, for example, where diffraction of light is what blurs the image, SKA images are also affected by the artificial noise artefacts, which make the image difficult to calculate and reconstruct, even with the help of supercomputers such as CSCS’s "Piz Daint" or its successor "Alps".
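The prism analogy can be made concrete with a discrete Fourier transform. In this sketch the input is a hypothetical signal, not SKA data: it is built from two pure tones of different strengths, and the transform recovers exactly those two frequencies and their amplitudes, much as a prism reveals the colours mixed into white light.

```python
import cmath
import math

def dft(x):
    """Direct discrete Fourier transform (O(N^2), fine for short signals)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

N = 64
# A mixed signal: tones at 5 and 12 cycles per window, with amplitudes 1.0 and 0.5.
signal = [math.sin(2 * math.pi * 5 * t / N) + 0.5 * math.sin(2 * math.pi * 12 * t / N)
          for t in range(N)]

# Amplitude of each frequency component (bins 0..N/2 suffice for a real signal).
amplitude = [2 * abs(c) / N for c in dft(signal)[: N // 2 + 1]]

strongest = sorted(range(len(amplitude)), key=lambda k: amplitude[k], reverse=True)[:2]
print(sorted(strongest))                                  # → [5, 12]
print(round(amplitude[5], 3), round(amplitude[12], 3))    # → 1.0 0.5
```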

To combine the signals from the different antennas into a single image, researchers use interferometry. The imaging relies on algorithms of the so-called CLEAN family, which have been in use for decades. These algorithms remove the blurring and turn the dirty image into a sharp image. They do this by assuming that the intensity distribution of radio sources in the sky is a collection of so-called point sources. To obtain a cleaned-up image, the blurred contribution of each point source is repeatedly calculated and subtracted from the dirty image until only residual noise remains; the point sources found in this way make up the sharp image.
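The iterative procedure can be sketched in the spirit of Högbom's CLEAN, the simplest member of the family. This one-dimensional example is a simplified illustration under stated assumptions: a wrap-around image of 32 pixels, a hypothetical coverage that measures only the 21 lowest spatial frequencies, two point sources and a loop gain of 0.1. The function names are illustrative, and the final step of a full CLEAN (convolving the model with a smooth "clean beam" and adding back the residuals) is omitted.

```python
import cmath

def circular_convolve(x, kernel):
    """y[t] = sum_s x[s] * kernel[(t - s) mod N], with the kernel centred at index 0."""
    n = len(x)
    return [sum(x[s] * kernel[(t - s) % n] for s in range(n)) for t in range(n)]

def hogbom_clean(dirty, beam, gain=0.1, threshold=1e-3, max_iter=10_000):
    """Minimal Hogbom-style CLEAN on a 1D image.

    Each iteration finds the brightest residual pixel, records `gain` times its
    value as a point source in the model, and subtracts that fraction of the
    dirty beam (shifted to that pixel) from the residual. Assumes beam[0] == 1
    and circular (wrap-around) geometry."""
    n = len(dirty)
    residual = list(dirty)
    model = [0.0] * n
    for _ in range(max_iter):
        peak = max(range(n), key=lambda i: abs(residual[i]))
        if abs(residual[peak]) < threshold:
            break
        flux = gain * residual[peak]
        model[peak] += flux
        for t in range(n):
            residual[t] -= flux * beam[(t - peak) % n]
    return model, residual

N = 32
# Hypothetical coverage: only the 21 lowest spatial frequencies are measured.
measured = [min(k, N - k) <= 10 for k in range(N)]
count = sum(measured)
# Dirty beam (point spread function), normalised so that beam[0] == 1.
beam = [sum(cmath.exp(2j * cmath.pi * k * t / N) for k in range(N) if measured[k]).real / count
        for t in range(N)]

sky = [0.0] * N
sky[5], sky[20] = 1.0, 0.6                  # two hypothetical point sources
dirty = circular_convolve(sky, beam)        # dirty image = sky blurred by the beam

model, residual = hogbom_clean(dirty, beam)
print("recovered sources:", {i: round(f, 3) for i, f in enumerate(model) if f != 0.0})
```

A small gain means many iterations, each removing only part of a source; this is one reason why the iteration count grows so quickly for large, data-rich telescopes.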

Step-by-step approach

"You have to identify source after source across the whole image and subtract their contribution from the dirty image to keep building the model across the whole sky," says Tolley. Depending on the telescope, this has typically required from 1,000 to several thousand iterations. For a telescope as large as SKA, however, with its much higher data rates, the number of iterations per image rises to at least tens of thousands and up to a few million. Once SKAO is fully operational, planned for 2030, researchers expect a terabyte of data per second. Conventional algorithms can no longer handle this deluge of data within a reasonable time, the researchers say.

The EPFL Center for Imaging has developed a new algorithm, called Bluebild, which can image the sky more accurately and more efficiently than CLEAN. "We try to find a good balance between the best possible representation and a good time to solution," says Tolley. To this end, the EPFL researchers in PASC are working in an interdisciplinary team with specialists in image processing, astronomy, radio astronomy and Big Data. The collaboration has two aims: first, to optimise the Bluebild algorithm so that it efficiently handles the incomplete yet enormous data collection; and second, to one day convert the received data into images in real time. "The fact that we combine all these different synergies to try to get this algorithm to work the way we want — not just to get the algorithm, but to get it to the point that it is usable widely in the community and it executes within different computing infrastructures — is an interesting and powerful aspect," says Krishna.

"The trick with Bluebild is what's called eigenvalue decomposition," says Tolley. "This means that even before you do any imaging, you split up your entire signal according to the brightness of the different sources in the sky." In other words, the light sources are sorted into very bright, medium and faint sources. "The eigenvalue decomposition allows us to isolate these different sources in different flux levels in one go. Just by tuning the signal a little bit, we remove 5 percent of the data, so we automatically filter out the noise," Krishna adds.

But the method has another advantage: by sorting the light sources according to strength, it becomes possible to see very weak sources that were previously masked by strong ones. "This eigenvalue decomposition gives us access to scientific cases that were previously inaccessible," Tolley explains.
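The quotes describe the idea only qualitatively. As a hedged illustration of the general principle, and explicitly not Bluebild's implementation (whose details are not given here), the sketch below builds a toy covariance matrix of the signals recorded by a small array, decomposes it into eigenvalues with the classic Jacobi method, and sorts the components from bright to faint. Everything in it is an assumption: the 8 antennas on a line, the simplified real-valued array response, the three source brightnesses, the noise level and the illustrative function names.

```python
import math

def jacobi_eigh(a, tol=1e-20, max_sweeps=100):
    """Eigenvalues and eigenvectors of a real symmetric matrix (cyclic Jacobi method).

    Returns (eigenvalues, v) where column j of v is the eigenvector for eigenvalue j."""
    n = len(a)
    a = [row[:] for row in a]                       # work on a copy
    v = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for _ in range(max_sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                # Rotation angle chosen so that the (p, q) entry becomes zero.
                theta = (a[q][q] - a[p][p]) / (2.0 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1.0))
                c = 1.0 / math.sqrt(t * t + 1.0)
                s = t * c
                for k in range(n):                  # A <- A J (columns p and q)
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):                  # A <- J^T A (rows p and q)
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
                for k in range(n):                  # V <- V J (accumulate eigenvectors)
                    vkp, vkq = v[k][p], v[k][q]
                    v[k][p], v[k][q] = c * vkp - s * vkq, s * vkp + c * vkq
    return [a[i][i] for i in range(n)], v

def toy_covariance(positions, directions, fluxes, noise_var):
    """Hypothetical model: R = sum_k F_k a_k a_k^T + noise_var * I,
    with a made-up array response a_k[m] = cos(2*pi*x_m*l_k)."""
    n = len(positions)
    r = [[noise_var if i == j else 0.0 for j in range(n)] for i in range(n)]
    for l, flux in zip(directions, fluxes):
        a = [math.cos(2 * math.pi * x * l) for x in positions]
        for i in range(n):
            for j in range(n):
                r[i][j] += flux * a[i] * a[j]
    return r

positions = list(range(8))         # 8 antennas on a line (hypothetical)
directions = [0.06, 0.22, 0.38]    # hypothetical source directions
fluxes = [10.0, 2.0, 0.5]          # one bright, one medium and one faint source
noise_var = 0.05                   # hypothetical receiver noise level

eigenvalues, _ = jacobi_eigh(toy_covariance(positions, directions, fluxes, noise_var))
eigenvalues.sort(reverse=True)     # brightest component first

# Adding noise_var * I shifts every eigenvalue by noise_var. The source part has
# rank 3 (one per source), so the other five eigenvalues sit exactly at the noise
# floor and can be discarded; the faint source keeps its own separate component.
signal = [lam for lam in eigenvalues if lam > noise_var + 1e-6]
noise = [lam for lam in eigenvalues if lam <= noise_var + 1e-6]
print("signal levels:", [round(lam, 3) for lam in signal])
print("discarded    :", len(noise), "noise components")
```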

Inconceivable amounts of data

If SKAO goes into full operation in 2030 as planned, it is expected to generate data on the order of 10 exabytes over a period of about 50 years. One exabyte (EB) is equal to ten to the power of 18, or 1,000,000,000,000,000,000 bytes. Processing and storing these data is a challenge that Bluebild aims to help address. While PASC researchers optimise Bluebild with the support of CSCS software developers, CSCS is also preparing to make portions of the generated data available for independent research.
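As a quick check of the orders of magnitude, assuming SI prefixes (1 PB = 10^15 bytes, 1 EB = 10^18 bytes) and, purely for illustration, spreading the projected total evenly over the 50 years:

```python
PB = 10**15          # bytes in a petabyte (SI prefix)
EB = 10**18          # bytes in an exabyte (SI prefix)

print(f"{EB:,}")                   # → 1,000,000,000,000,000,000

total_bytes = 10 * EB              # projected lifetime total
years = 50
print(total_bytes / years / PB)    # → 200.0 (petabytes per year, on average)
```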

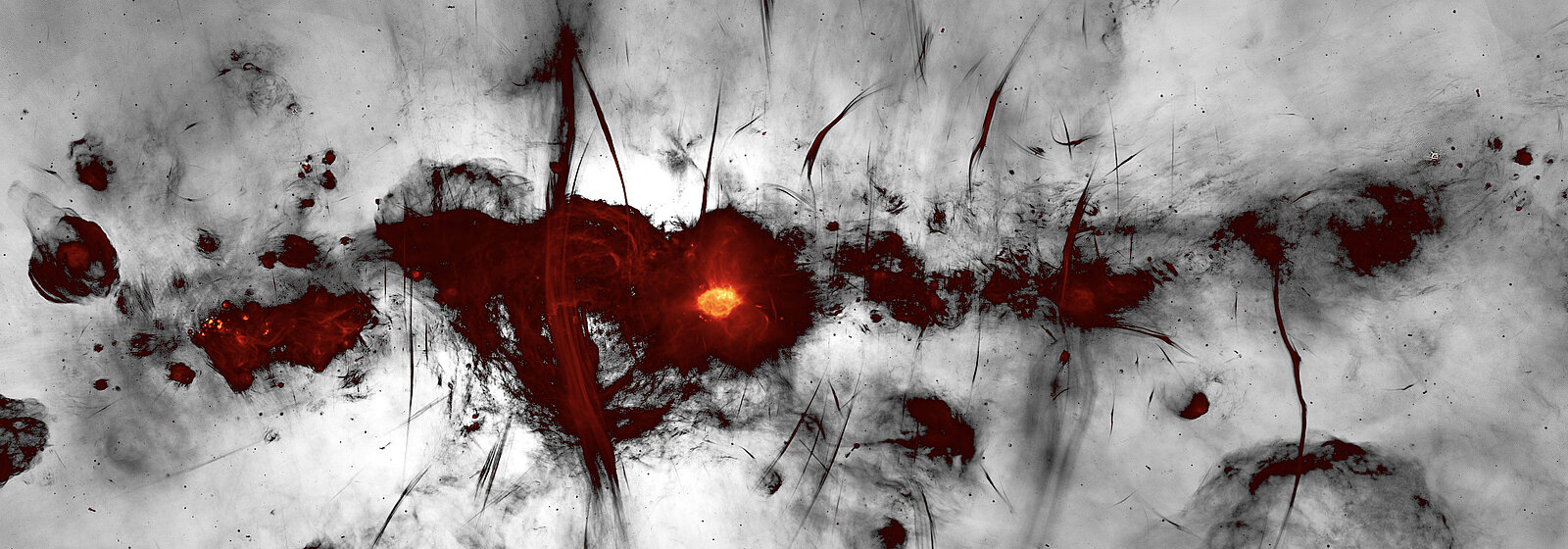

Image above: The new MeerKAT image of the Galactic Centre region of the Milky Way, with the galactic plane running horizontally across the image. Many new as well as previously known radio features are evident, including supernova remnants, compact star-forming regions, and the large population of mysterious radio filaments. The broad feature running vertically through the image is the inner part of the (previously discovered) radio bubbles, which span 1,400 light-years across the centre of the galaxy. Colours indicate bright radio emission, while fainter emission is shown in greyscale. (Image: I. Heywood, SARAO)

This article may be used in other media and online portals provided the copyright conditions are observed.